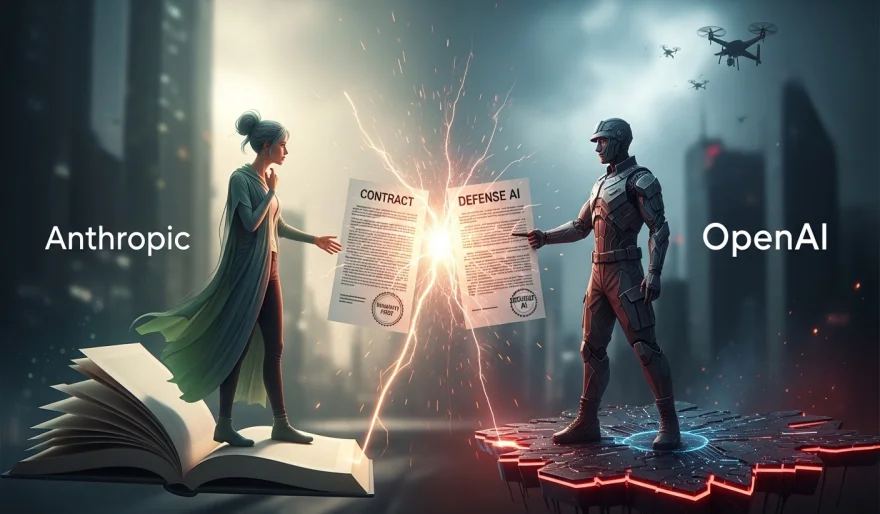

Anthropic CEO Accuses OpenAI of Misleading Messaging Over Pentagon Deal

3 min read

Anthropic CEO Dario Amodei has publicly criticized OpenAI’s communication around its U.S. Department of Defense agreement, calling the company’s messaging “straight up lies,” according to reports. The dispute follows OpenAI’s announcement of a military partnership shortly after Anthropic stepped back from similar talks due to ethical concerns. The controversy highlights growing tensions in the AI industry over government collaborations, transparency, and safety standards.

March 05, 2026 15:49

The rivalry between leading AI labs has escalated after Anthropic CEO Dario Amodei publicly accused OpenAI of misrepresenting details around its recent U.S. Department of Defense agreement.

According to reports, Amodei described OpenAI’s public framing of the military deal as “straight up lies,” arguing that the company’s messaging does not accurately reflect the nature of the contract or the safeguards involved.

What triggered the dispute?

Anthropic had previously engaged in discussions with the U.S. Department of Defense but ultimately stepped back, citing concerns about how its technology could be used, particularly in sensitive applications such as autonomous systems and large-scale surveillance.

Shortly after, OpenAI announced its own agreement with the Pentagon, stating that the partnership includes technical safeguards and aligns with its safety principles. That announcement appears to have intensified tensions between the two companies.

A deeper philosophical divide

Beyond the public accusations, the disagreement highlights a broader split in the AI industry:

- One approach prioritizes strict ethical boundaries and limited military engagement.
- The other emphasizes structured collaboration with government agencies under defined safety controls.

Amodei’s comments suggest frustration not only with the contract itself but also with how the agreement was communicated to the public and to employees.

Why this matters

As AI systems become more powerful and integrated into national security infrastructure, partnerships with defense agencies are becoming increasingly common. However, transparency, safety guarantees, and public trust remain highly sensitive issues.

This clash underscores a growing question in the AI industry:

How should frontier AI companies balance innovation, commercial opportunity, and military collaboration without eroding public confidence?
